There is trouble in paradise for Tesla: the US federal agency governing road safety has announced an investigation into the company and its self-driving car claims.

The National Highway Traffic Safety Administration has identified 11 crashes of ‘particular concern,’ where the vehicle was operating in an autopilot mode prior to the collision and the driver was sometimes completely distracted from the task of driving.

Tesla’s vehicles boast ‘Full Self-Driving’ (FSD), but current regulations do not allow fully autonomous vehicles on the road.

IDTechEx examines this peculiar situation in detail in its latest report, “Autonomous Cars, Robotaxis & Sensors 2022–2042”.

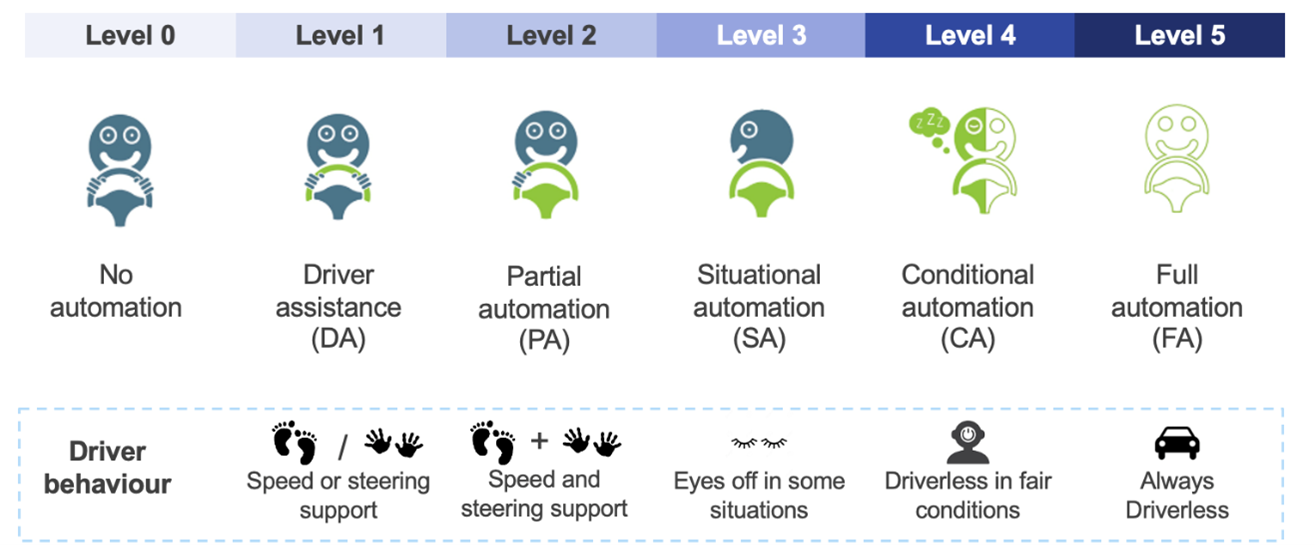

Currently, the Society of Automotive Engineers defines six levels of automation, numbered 0 to 5.

Level 1 vehicles need to automate only one part of driving, such as maintaining a set speed, which can be achieved with cruise control. Level 2 vehicles must be able to assist with two aspects of driving; this is typically satisfied by combining adaptive cruise control with a lane-keep assist system.

It is not until level 4 that we start to approach the futuristic idea of self-driving cars. According to the IDTechEx report, Tesla Autopilot is only a level 2 system. A Tesla is not a self-driving car, and a human always needs to pay attention to the road.

However, Tesla Autopilot is still a very advanced system. In fact, according to Consumer Reports, it is the second-best Advanced Driver Assistance System on the market, with GM’s Super Cruise taking the top spot.

The abilities of a level 2 system are shown in videos of the Tesla system in action, where it handles traffic very well and can follow the navigation route. But in the end, it is the human that is driving the car and not the other way around. Drivers who appear in videos asleep or out of the driver’s seat are abusing the system and breaking the law.

IDTechEx has pointed out that no vehicle currently on sale offers anything more than driver assistance features, as the driver is always in control.

Crucially, the report points out that Tesla’s problem is not the technology itself but how it has been marketed. The problem ranges from the ‘Full Self-Driving’ labelling to the way that CEO Elon Musk describes the system.

Musk has been talking about the ‘Full Self-Driving’ upgrade on Tesla vehicles since 2018. After multiple revisions, upgrades, and hype cycles since then, some confused customers are lured into thinking they have bought a self-driving autonomous vehicle when in reality such technology does not even exist.

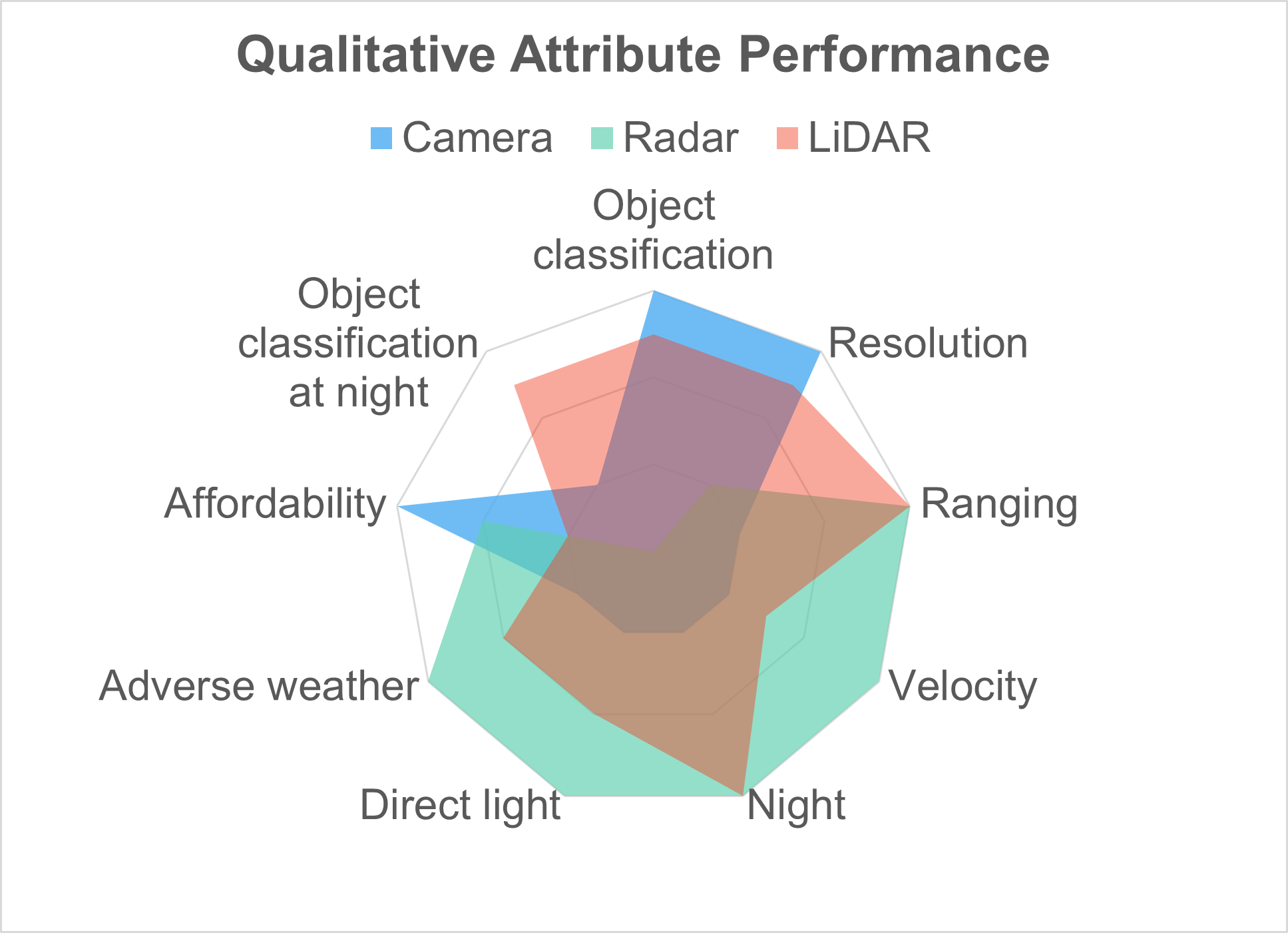

While other manufacturers rely heavily on cameras, radars, and LiDARs, Tesla is pursuing a camera-only approach and has even gone as far as removing the radar from Model 3 and Model Y vehicles.

The problem, however, is that cameras do not have the same capabilities as radar. Although cameras can infer range and velocity through AI, radars measure this information intrinsically and are almost completely unaffected by poor weather, darkness, and direct sunlight, all of which present challenges for a camera.

Tesla can certainly exceed human driving performance with a camera-only suite, but IDTechEx believes the company has limited its potential by disregarding radar and has put itself at a competitive disadvantage.

Radars and LiDARs become especially important at night, when camera systems perform far below their peak potential. This matters because most pedestrian fatalities happen at night. The NHTSA also noted that most of the incidents it is investigating involving Tesla’s Autopilot system happened in darker conditions.

Tesla Autopilot and FSD are still among the best systems on the market. IDTechEx said it is unlikely that the US government and the NHTSA will find anything untoward or dangerous within these systems. Instead, the problem lies entirely with the misinterpretation of the systems’ capabilities.

There needs to be a clear message that these are not fully self-driving vehicles and that the driver remains responsible for driving the vehicle, because a lapse in attention could cost other people’s lives.
